Intel and Google Strengthen AI Partnership: Xeon Processors and Custom IPUs at the Core of Strategy

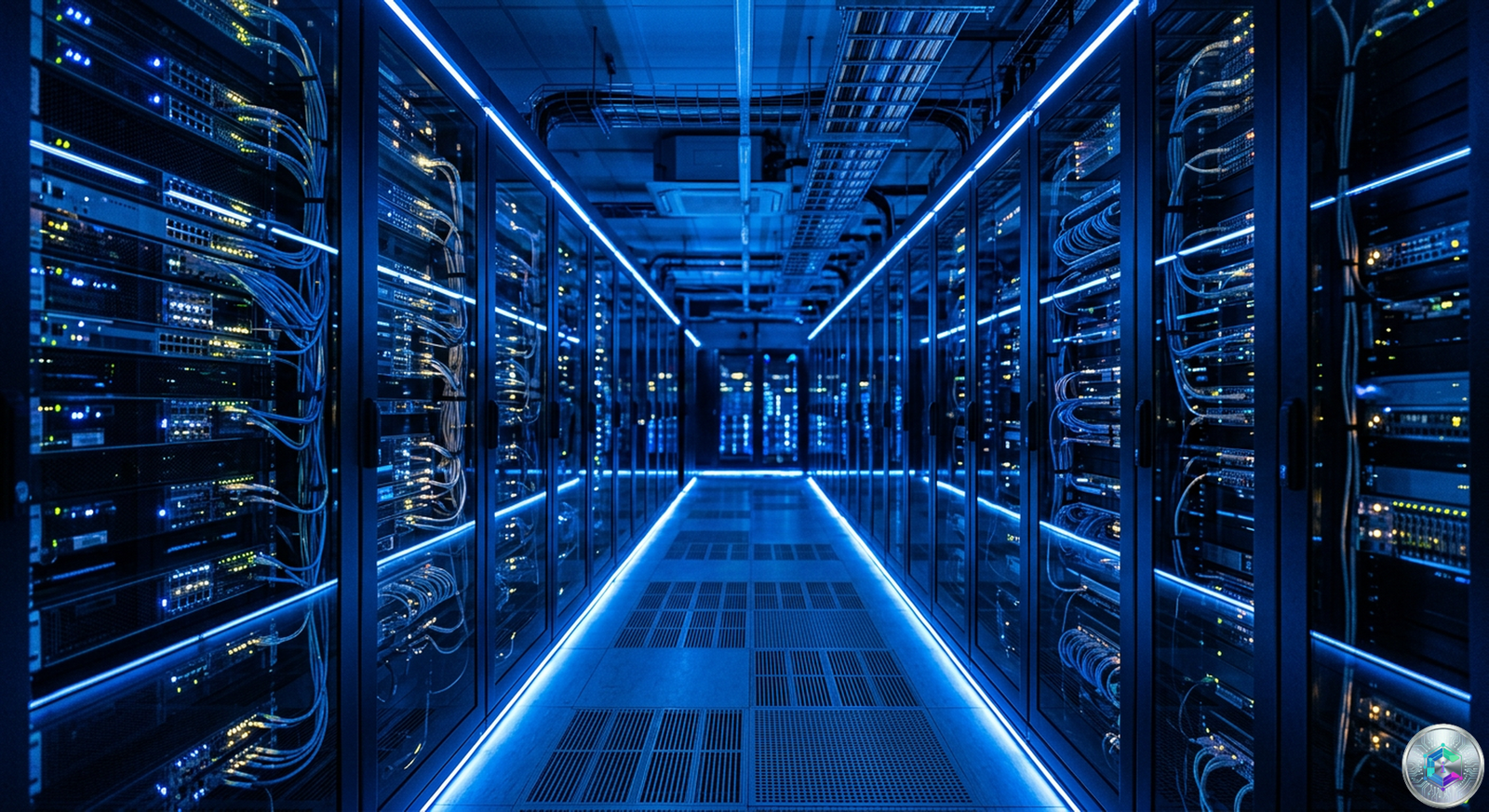

As artificial intelligence transforms global cloud infrastructure, Intel and Google have announced a multi-year partnership to develop next-generation technologies together. The collaboration marks a significant step in the evolution of cloud computing infrastructure and could redefine industry standards.

The American semiconductor giant and the cloud computing leader have formalized a strategic alliance built around two main areas. Intel’s next-generation Xeon processors will power Google’s cloud instances, while co-developed custom ASIC-based IPUs will optimize network and security performance.

A Strategic Partnership Focused on Xeon Processors

Intel and Google Cloud have signed a multi-year agreement to advance next-generation cloud and artificial intelligence infrastructure. The agreement covers several generations of Intel Xeon processors and aims to improve performance and energy efficiency while reducing the total cost of ownership of Google’s global infrastructure.

Intel Xeon 6 processors are now deployed on Google Cloud’s C4 and N4 instances. These platforms support a wide range of workloads, from large-scale AI training coordination to latency-sensitive inference and general-purpose computing. This range shows the versatility of Xeon processors in the modern cloud ecosystem.

The integration of Xeon 6 into Google’s infrastructure represents a major investment from both companies. C4 and N4 instances benefit from improved performance per watt, an essential criterion given the growing energy consumption of data centers.

Xeon 6 processors represent a major advancement in server processor architecture. With additional cores and improved energy efficiency, these chips meet the growing demands of artificial intelligence workloads. Google Cloud chose these processors to power its most requested instances, demonstrating confidence in Intel technology.

The Rise of Custom IPUs

One of the most innovative aspects of this partnership lies in the co-development of custom ASIC-based IPUs. Infrastructure Processing Units represent a major evolution in modern computer systems architecture. These programmable accelerators offload network, storage and security functions traditionally handled by host CPUs.

As AI adoption accelerates, infrastructure becomes more complex and heterogeneous. This complexity increases dependence on CPUs for orchestration, data processing and system-level performance. IPUs address this challenge directly: by taking over infrastructure tasks, they free up CPU capacity for those workloads.

By managing infrastructure tasks, IPUs allow cloud providers to scale more efficiently without increasing overall system complexity. This heterogeneous and balanced approach represents the future of AI infrastructure.
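The capacity argument can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes a hypothetical share of host CPU cycles spent on infrastructure work and an ideal offload in which all of that work moves to the IPU. Neither Intel nor Google has published these figures, and `freed_capacity` is a made-up helper name.

```python
def freed_capacity(infra_fraction: float) -> float:
    """Relative gain in CPU capacity available to applications.

    infra_fraction is the (hypothetical) share of host CPU cycles spent on
    network, storage and security work before offload. Assumes an ideal
    offload: all of that work moves to the IPU.
    """
    if not 0.0 <= infra_fraction < 1.0:
        raise ValueError("infra_fraction must be in [0, 1)")
    # Applications had (1 - f) of the CPU before and have all of it after.
    return infra_fraction / (1.0 - infra_fraction)


# Hypothetical shares, chosen only to show the shape of the curve.
for share in (0.10, 0.20, 0.30):
    gain = freed_capacity(share)
    print(f"{share:.0%} of cycles on infrastructure -> {gain:.1%} more for applications")
```

The gain grows faster than the offloaded share itself, which is why offload matters more as infrastructure overhead rises.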

Impact on the Cloud Ecosystem

The deployment of next-generation Intel Xeon processors in the Google Cloud ecosystem will be a key indicator of this collaboration’s growing influence. The performance evolution of C4 and N4 instances powered by Xeon 6 will provide important signals about the effectiveness of this alliance against AMD and ARM chip competition in the cloud.

This collaboration fits into a broader trend of chip customization among hyperscalers. Google already has a history of creating custom chips for specific use cases in its data centers. The Intel agreement represents a natural evolution of this strategy.

Kearney analysts emphasize that custom data center chips for hyperscalers have become the new norm. This trend is accelerating bare metal adoption and profoundly transforming the semiconductor industry.

Performance and Energy Efficiency

Preliminary tests conducted by Google show encouraging results. Instances equipped with Xeon 6 and custom IPUs demonstrate significant performance improvements over previous generations. Energy efficiency has become a major selection criterion for data center operators.

Xeon 6 processors incorporate advanced features dedicated to AI workloads, including compute units for model inference and training. This hardware integration optimizes resources and reduces overall energy consumption.

The heterogeneous architecture proposed by Intel and Google allows optimal task distribution. Xeon CPUs manage orchestration and coordination, while IPUs handle network and security functions. This separation improves overall system efficiency.
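The division of labor described here can be pictured as a simple placement table. This is a toy sketch rather than how Google's scheduler or Intel's IPU software works: the task categories come from the paragraph above, while the `Unit` enum, the `PLACEMENT` table and the `place` function are hypothetical names introduced for illustration.

```python
from enum import Enum


class Unit(Enum):
    CPU = "Xeon CPU"
    IPU = "IPU"


# Task categories named in the article, mapped to the unit said to handle them.
PLACEMENT = {
    "orchestration": Unit.CPU,
    "coordination": Unit.CPU,
    "network": Unit.IPU,
    "security": Unit.IPU,
}


def place(task_kind: str) -> Unit:
    """Return the unit that handles a task category.

    Assumption: anything not listed stays on the general-purpose CPU.
    """
    return PLACEMENT.get(task_kind, Unit.CPU)


for kind in ("orchestration", "network", "security", "batch-job"):
    print(f"{kind:>13} -> {place(kind).value}")
```

Defaulting unknown work to the CPU reflects the idea that the CPU is the versatile part of the pair and the IPU the specialized one.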

Implications for the Industry

The Intel-Google partnership offers a collaboration model that other market players could replicate. The trend toward custom chips should accelerate in the coming years, with more partnerships between chipmakers and cloud service providers.

Experts predict this collaboration could inspire similar announcements. The advantages in terms of performance, efficiency and cost control encourage major cloud names to explore custom solutions.

The industry is also observing the impact on other market players. AMD and ARM chip providers must now respond to this strategic alliance with their own innovations. Competition ultimately benefits end users who enjoy increasingly powerful solutions.

Toward a New Era of Infrastructure

The partnership between Intel and Google marks a turning point in the cloud computing industry. The combination of Xeon 6 processors and custom IPUs represents an innovative approach that addresses modern AI infrastructure challenges.

This collaboration demonstrates the importance of the heterogeneous approach in designing next-generation infrastructure. Combining versatile CPUs and specialized accelerators offers the best of both worlds in terms of flexibility and efficiency.

The coming years will be decisive in determining the extent of this technological transformation. Massive investments in custom chips testify to a common will to push the boundaries of innovation.

The overall cloud ecosystem benefits from these advances. Businesses using cloud services enjoy more powerful, more efficient infrastructure better suited to modern AI requirements.

Geopolitical and Strategic Context

This partnership comes amid intense competition between American and Chinese tech giants. The semiconductor industry has become a major strategic issue, with implications that go far beyond commerce. The United States and China are engaged in a technological arms race in which control of semiconductor supply chains is of paramount importance.

Intel, as an American semiconductor flagship, plays a crucial role in this geopolitical equation. Its partnership with Google helps strengthen the United States’ position in the AI race. Both companies share a common vision of technological independence, a major issue for the coming years.

Investments in high-performance computing infrastructure also represent a digital sovereignty imperative. Governments worldwide are becoming aware of the importance of controlling their own computing resources for artificial intelligence.

Technical Performance Analysis

Intel Xeon 6 processors are the latest generation in Intel’s server processor lineup. These chips integrate highly efficient performance cores designed to meet the requirements of modern workloads. The Redwood Cove architecture of the Xeon 6 performance cores offers improvements in instructions per cycle.

Independent tests conducted by several laboratories show encouraging results. High-performance computing performance reaches unprecedented levels, particularly for AI inference workloads. This evolution meets the growing demand for computing capabilities in data centers.

Energy efficiency is one of the major assets of Xeon 6 processors. With data center electricity bills skyrocketing, performance per watt has become a decisive criterion. Xeon 6 is reported to reduce power consumption by up to 20% compared to the previous generation.
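To see what a 20% reduction in consumption means for performance per watt, a short worked calculation helps. The sketch below assumes, purely for illustration, that throughput stays the same while power drops by 20%. The article does not state the performance change, and `perf_per_watt_gain` is a hypothetical helper.

```python
def perf_per_watt_gain(power_reduction: float, perf_change: float = 0.0) -> float:
    """Relative change in performance per watt versus the previous generation.

    power_reduction: fractional drop in power draw (0.20 means 20% less).
    perf_change: fractional change in throughput (assumed 0.0 by default,
    since the article gives no figure).
    """
    new_perf = 1.0 + perf_change
    new_power = 1.0 - power_reduction
    return new_perf / new_power - 1.0


# Same work done with 20% less power.
print(f"{perf_per_watt_gain(0.20):.0%}")  # prints 25%
```

Under that assumption, a 20% cut in power is a 25% gain in performance per watt, because the same work is divided by 0.8 of the original power.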

The Role of IPUs in Modern Infrastructure

Infrastructure Processing Units represent a major innovation in system architecture. These chips are dedicated to infrastructure processing and take repetitive but resource-intensive tasks off the main processors.

The IPU jointly developed by Intel and Google combines several advanced technologies. Network packet processing, encryption and storage management are all functions that can be accelerated by these specialized chips. This approach allows overall system optimization.

The advantages of IPUs go far beyond simple performance improvements. Reducing the load on main CPUs frees resources for critical applications. This separation of tasks also improves security by isolating sensitive functions.

Future Outlook and Market Implications

The Intel-Google alliance should encourage other players to form similar partnerships. The trend toward custom chips is accelerating, with profound implications for the entire industry. Cloud providers must now choose between standardized solutions and custom chips.

This evolution particularly benefits large companies capable of investing in proprietary development. Small and medium businesses will continue to depend on standard offerings, but will indirectly benefit from improvements brought by hyperscaler innovations.

The semiconductor market is undergoing a profound transformation. Barriers to entry are increasing, but opportunities for innovators remain numerous. The convergence between software and hardware creates new business opportunities.

Impact on End Users

Users of Google Cloud services directly benefit from this collaboration. Instances equipped with Xeon 6 offer better performance at reduced operational costs. This improvement translates into more competitive pricing and better service quality.

AI application developers also benefit from this optimized infrastructure. AI tools and frameworks can fully exploit the capabilities of the new chips. This compatibility facilitates the adoption of cutting-edge technologies.

Reducing the carbon footprint of data centers represents a major environmental challenge. The joint efforts of Intel and Google contribute to more responsible computing, a goal shared by the entire technology industry.

Competitive Analysis

AMD and ARM represent Intel’s main competitors in this segment. AMD’s EPYC processors have gained significant market share in recent years. The collaboration with Google allows Intel to maintain its competitiveness against this growing competition.

ARM chips, although traditionally designed for mobile devices, are gaining ground in data centers. ARM architecture offers useful flexibility for certain workloads. The Intel-Google alliance suggests that x86 CPUs remain a preferred choice for intensive workloads.

The innovation race is now playing out on multiple fronts. Raw performance remains important, but energy efficiency and operational costs are becoming decisive criteria. This reality pushes manufacturers to innovate on all fronts.

Conclusion

The strategic partnership between Intel and Google represents a significant evolution in the cloud computing industry. The combination of Xeon 6 processors and custom IPUs addresses current technical challenges while anticipating future needs.

This collaboration illustrates the broader trend toward infrastructure heterogeneity. Architectures combining versatile CPUs and specialized accelerators offer the best balance between flexibility and performance. This approach should establish itself as the industry standard.

The coming years will be marked by an acceleration of innovation in this field. Massive investments in research and development promise significant advances. The entire ecosystem will benefit from this technological progress.

This article is for informational and educational purposes only. It does not constitute investment advice. The companies mentioned are subject to market fluctuations: conduct your own research before making any decisions.